Specifically the eSRAM: it sounds like it was a solution they had to settle for, as opposed to what they would have liked to accomplish with more time.

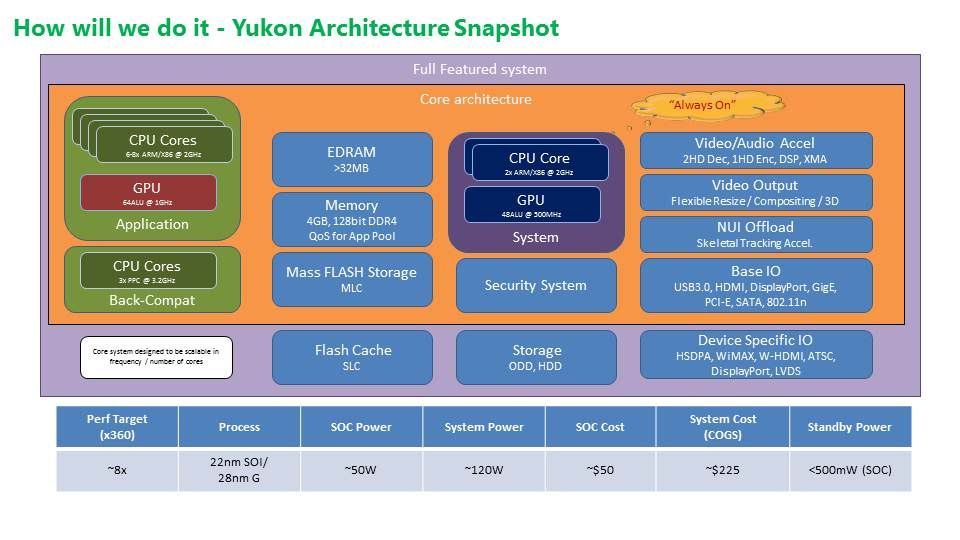

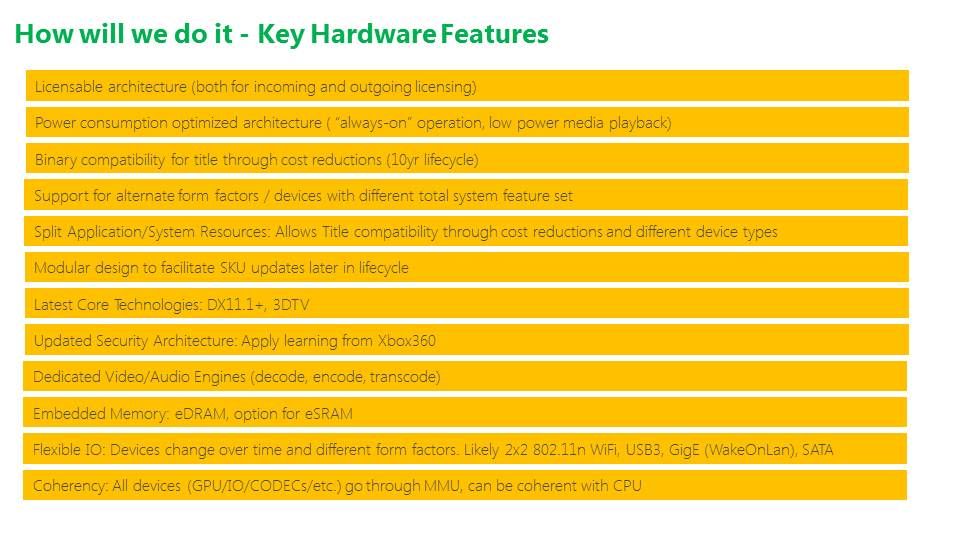

No. The eSRAM was in the design before either the 8GB of RAM or the choice of DDR3. You can check the Yukon leak for proof of this. It's intended specifically as a natural evolution of the 360's eDRAM. It's not, nor was it ever, considered a band-aid. It was designed to use eSRAM because that gives the same benefits as any other high-bandwidth setup without most of the downsides (cost, reduced manufacturing flexibility).

The other thing I gathered was that because they have an upscaler, native full-HD rendering wasn't important to them. And that really blows, because ensuring that the X1 could do 1080p at 60fps natively would have given devs more headroom than they are now afforded.

Again, no. They intelligently realized that we are deep into the HD era now, where pixel count isn't necessarily important to the visual fidelity the user actually sees on screen. On PC it matters, as you sit inches from your monitor. On consoles you sit 6ft+ away from an HDTV, and the angular resolution of the human eye simply isn't good enough to easily see a difference between, for example, 900p upscaled and 1080p. See RYSE for proof of this.
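To put rough numbers on the viewing-distance argument, here is a small Python sketch that computes how many pixels fall within one degree of visual angle for a given screen size, vertical resolution and viewing distance. The 50-inch screen, the 6ft distance and square pixels on a 16:9 panel are assumptions for illustration. The often-quoted figure of about 60 pixels per degree for 20/20 acuity is a rule of thumb, not a hard perceptual limit, and the result depends heavily on screen size and distance.

```python
import math

def pixels_per_degree(diagonal_in: float, vertical_px: int, distance_in: float,
                      aspect: float = 16 / 9) -> float:
    """Horizontal pixels per degree of visual angle at the centre of the screen."""
    # Screen width derived from the diagonal and the aspect ratio.
    width_in = diagonal_in * aspect / math.hypot(aspect, 1.0)
    horizontal_px = round(vertical_px * aspect)  # square pixels assumed
    pixel_pitch_in = width_in / horizontal_px
    # Visual angle subtended by a single pixel, in degrees.
    pixel_angle_deg = math.degrees(2 * math.atan(pixel_pitch_in / (2 * distance_in)))
    return 1.0 / pixel_angle_deg

# Example: a 50-inch 16:9 TV viewed from 6ft (72 inches).
for lines in (900, 1080):
    ppd = pixels_per_degree(diagonal_in=50, vertical_px=lines, distance_in=72)
    print(f"{lines}p: {ppd:.1f} pixels per degree")
```

Plugging in a larger distance or a smaller screen shows where each format crosses the ~60 px/deg rule of thumb.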

There is a massive elephant in the room in graphics rendering, and it's exacerbated on consoles/TVs: resolution scales non-linearly against performance, but the visual payoff also scales non-linearly against human perception. In other words, throwing an extra 180 lines of pixels on screen (1600x900 to 1920x1080 is 44% more pixels) won't even be noticed by users, yet it costs you a TON in processing for a heavily diminished return.
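The 900p-to-1080p arithmetic as a quick Python check. It assumes 16:9 frames (1600x900 vs 1920x1080) and, as a simplification, that per-pixel shading cost grows linearly with pixel count, so the 180 extra lines translate directly into about 44% more pixel work.

```python
import math

def pixel_count(width: int, height: int) -> int:
    """Total pixels shaded per frame at a given resolution."""
    return width * height

p900 = pixel_count(1600, 900)    # 1,440,000 pixels
p1080 = pixel_count(1920, 1080)  # 2,073,600 pixels

extra_lines = 1080 - 900
extra_pixels = p1080 - p900
ratio = p1080 / p900

# Same 16:9 aspect, so the pixel ratio is the square of the line ratio.
assert math.isclose(ratio, (1080 / 900) ** 2)

print(f"{extra_lines} extra lines -> {extra_pixels:,} extra pixels per frame ({ratio:.2f}x)")
# prints: 180 extra lines -> 633,600 extra pixels per frame (1.44x)
```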

What MS did was recognize this and give devs the ability to tightly manage framerate, resolution and color depth per display plane. They did extensive testing of this at MSR, looking at display planes used in a best-case scenario, and found that going full HD when you don't need to wastes 5-6 times the GPU processing in most cases. Letting devs process foregrounds and backgrounds independently on the GPU, then using a really high-end scaler for free AA and good image quality (IQ), means devs can get almost exactly the same results as 1080p plus good AA without spending that additional GPU processing at all.
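The display-plane idea (scene rendered below native resolution and scaled up, HUD composited on top at native resolution) can be sketched in Python on tiny grayscale images. This is a hypothetical illustration: `bilinear_upscale` and `composite` are made-up helper names, bilinear filtering stands in for the console's hardware scaler (whose actual filter isn't described here), and nothing below reflects the Xbox One's real display-plane API.

```python
from typing import List

Image = List[List[float]]  # grayscale, values in [0, 1], indexed [row][col]

def bilinear_upscale(src: Image, out_w: int, out_h: int) -> Image:
    """Resize src to out_w x out_h with bilinear interpolation (corners aligned)."""
    in_h, in_w = len(src), len(src[0])
    out: Image = []
    for y in range(out_h):
        # Map the output row to a fractional source row.
        fy = y * (in_h - 1) / (out_h - 1) if out_h > 1 else 0.0
        y0 = int(fy)
        y1 = min(y0 + 1, in_h - 1)
        wy = fy - y0
        row = []
        for x in range(out_w):
            # Map the output column to a fractional source column.
            fx = x * (in_w - 1) / (out_w - 1) if out_w > 1 else 0.0
            x0 = int(fx)
            x1 = min(x0 + 1, in_w - 1)
            wx = fx - x0
            top = src[y0][x0] * (1 - wx) + src[y0][x1] * wx
            bottom = src[y1][x0] * (1 - wx) + src[y1][x1] * wx
            row.append(top * (1 - wy) + bottom * wy)
        out.append(row)
    return out

def composite(scene: Image, hud: Image, hud_alpha: Image) -> Image:
    """'Over' blend of a native-resolution HUD plane onto the upscaled scene plane."""
    return [
        [h * a + s * (1 - a) for s, h, a in zip(s_row, h_row, a_row)]
        for s_row, h_row, a_row in zip(scene, hud, hud_alpha)
    ]

# Toy sizes standing in for a 1600x900 scene plane and a 1920x1080 output.
scene_lo = [[0.0, 1.0], [1.0, 0.0]]
scene_hi = bilinear_upscale(scene_lo, out_w=3, out_h=3)
hud = [[1.0] * 3 for _ in range(3)]
alpha = [[0.0] * 3, [0.0] * 3, [1.0] * 3]  # HUD covers only the bottom row
frame = composite(scene_hi, hud, alpha)
print(frame)
```

The point of splitting planes this way is that only the scene pays the reduced-resolution cost; text and HUD elements stay crisp because they never go through the scaler.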

RYSE is a great example, as is Dead Rising 3 (DR3). In RYSE's case, the game gets free AA via the display planes by rendering at 900p and upscaling. At the same time this boosts performance, which they spent on more dynamic bits on Marius' armor and more visual effects in general. So you get a better-looking game in the areas people actually notice, without any compromise to the end result as seen on your TV with the naked eye. For DR3, they locked the framerate using the display planes via a dynamic resolution that fluctuates slightly in ways that aren't noticeable, even with fully destructible zombies numbering well into the hundreds on screen at once (plus huge open levels with lots of destructibility). So there you get all of that without having to pare back the destruction or zombie count (gameplay stuff), AND your framerate is locked at 30fps. For free relative to the GPU. More control over graphics optimizations in areas of heavily diminished returns is a GOOD thing.
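A dynamic-resolution scheme like the one described for DR3 is typically a feedback loop: measure GPU time for the last frame, then pick a render resolution for the next one that should land inside the frame budget. The Python sketch below is a hypothetical controller, not DR3's actual implementation; the 90% headroom, the 0.7 minimum scale, the half-step smoothing, and the assumption that GPU cost scales with pixel count (so with the square of the scale) are all illustrative choices.

```python
import math

FRAME_BUDGET_MS = 1000.0 / 30  # ~33.3 ms per frame for a locked 30fps

def next_render_scale(scale: float, gpu_ms: float,
                      budget_ms: float = FRAME_BUDGET_MS,
                      headroom: float = 0.9,
                      min_scale: float = 0.7, max_scale: float = 1.0) -> float:
    """Choose the next frame's render scale (fraction of native width/height).

    gpu_ms is the GPU time measured for the last frame, rendered at `scale`.
    Cost is assumed proportional to pixel count, i.e. to scale**2, so the scale
    that would just hit the target time is scale * sqrt(target / gpu_ms).
    """
    target_ms = budget_ms * headroom           # leave some slack for spikes
    ideal = scale * math.sqrt(target_ms / gpu_ms)
    smoothed = scale + 0.5 * (ideal - scale)   # move halfway to avoid oscillation
    return max(min_scale, min(max_scale, smoothed))

# Usage: GPU load spikes (say, a big zombie horde) and then eases off.
scale = 1.0
for gpu_ms in (28.0, 36.0, 38.0, 31.0, 26.0):
    scale = next_render_scale(scale, gpu_ms)
    print(f"last frame {gpu_ms:4.1f} ms -> next frame at {round(1080 * scale)}p")
```

Smoothing the step trades a frame or two of reaction time for fewer visible resolution jumps, which fits the "fluctuates slightly" behavior described above.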

And why do they keep referring to what they've done as an increase? The 7790 was built to run with 14 CUs at 1GHz for 1.79TF. 12 CUs at 853MHz for 1.31TF is a DECREASE, not an upclock. They could at least give the people they're talking to a little credit for being able to do basic math.

They were originally using an 800MHz GPU, so 853MHz is an upclock relative to their own design. You have to earn credit, and trying to compare their setup to discrete chips isn't a good way to start.
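The peak-throughput numbers being argued over come from a simple formula: compute units × 64 shader lanes per CU (GCN) × 2 FLOPs per lane per clock (a fused multiply-add) × clock speed. A Python check of the figures above, including the 800MHz to 853MHz bump; these are theoretical peaks only and say nothing about achieved performance.

```python
LANES_PER_CU = 64   # GCN compute unit: 4 SIMD units x 16 lanes
FLOPS_PER_LANE = 2  # one fused multiply-add per lane per clock = 2 FLOPs

def peak_tflops(compute_units: int, clock_mhz: float) -> float:
    """Theoretical single-precision peak in TFLOPS."""
    return compute_units * LANES_PER_CU * FLOPS_PER_LANE * clock_mhz * 1e6 / 1e12

hd7790 = peak_tflops(14, 1000)      # Radeon HD 7790 reference clock
xb1_before = peak_tflops(12, 800)   # Xbox One GPU at the original 800MHz
xb1_after = peak_tflops(12, 853)    # Xbox One GPU after the upclock

print(f"HD 7790:        {hd7790:.3f} TF")
print(f"Xbox One @800:  {xb1_before:.3f} TF")
print(f"Xbox One @853:  {xb1_after:.3f} TF (+{853 / 800 - 1:.1%} clock)")
```

Both readings in the exchange are arithmetically true: 853MHz is below a 7790's clock and above the console's own earlier 800MHz target.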

I'm a little confused by what they were trying to say about bandwidth. Perhaps I've misunderstood, but it sounds almost like they're "hoping" their emphasis on bandwidth over CUs is going to pay off. They don't sound entirely convinced of it either, as they kind of leave that up in the air.

For GPGPU stuff, the two companies have different strategies. Sony has little experience with it compared to MS. Sony wants lots of CUs to help with GPGPU loads (physics, for instance). In my view, this might be problematic, as having CUs is worthless if the loads are stalled by pending fetch requests to memory. GDDR5 has lots of latency, and physics calculations usually need low latency to avoid stalling your GPU/CUs (hence physics is usually done on the CPU). That's MS's thinking too, I think. In their setup you can use your CUs to access eSRAM, which has very low latency in addition to high bandwidth. So you can bring in lots of data at once to run in parallel, and that data can change violently during the frame without causing stalls. That's just my interpretation of their position, though. Might be wrong.
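The stall argument can be illustrated with a deliberately simplified latency-hiding model: each wavefront alternates between some ALU work and waiting on a memory fetch, and the other resident wavefronts can run while it waits. The cycle counts below are made-up round numbers, not measured GDDR5 or eSRAM latencies, and real GCN scheduling is more complex; the point is only that once fetch latency outgrows what the resident wavefronts can cover, each CU sits partly idle no matter how many CUs there are.

```python
def alu_utilization(waves_per_simd: int, alu_cycles_per_fetch: float,
                    fetch_latency_cycles: float) -> float:
    """Fraction of cycles a SIMD has math ready to issue (simplified model).

    Each wavefront repeatedly does `alu_cycles_per_fetch` cycles of math, then
    waits `fetch_latency_cycles` for its memory request. While one wavefront
    waits, the others can issue, so more resident wavefronts hide more latency.
    Utilization can't exceed 1.0.
    """
    period = alu_cycles_per_fetch + fetch_latency_cycles
    return min(1.0, waves_per_simd * alu_cycles_per_fetch / period)

# Hypothetical numbers: 4 resident wavefronts, 40 ALU cycles between fetches.
for latency in (100, 300, 600):
    u = alu_utilization(waves_per_simd=4, alu_cycles_per_fetch=40,
                        fetch_latency_cycles=latency)
    print(f"fetch latency {latency:3d} cycles -> ALU busy {u:.0%} of the time")
```

In this model, adding CUs doesn't change any one CU's utilization; only more resident wavefronts, more math per fetch, or lower fetch latency does.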

Both companies are guessing with their strategies here. I'd wager MS's experience there (DirectCompute, Kinect) gives them a better chance of being right than Cerny's expectations, though.