Some very interesting details from this presentation.

http://www.redgamingtech.com/ubisoft-gdc-presentation-of-ps4-x1-gpu-cpu-performance/

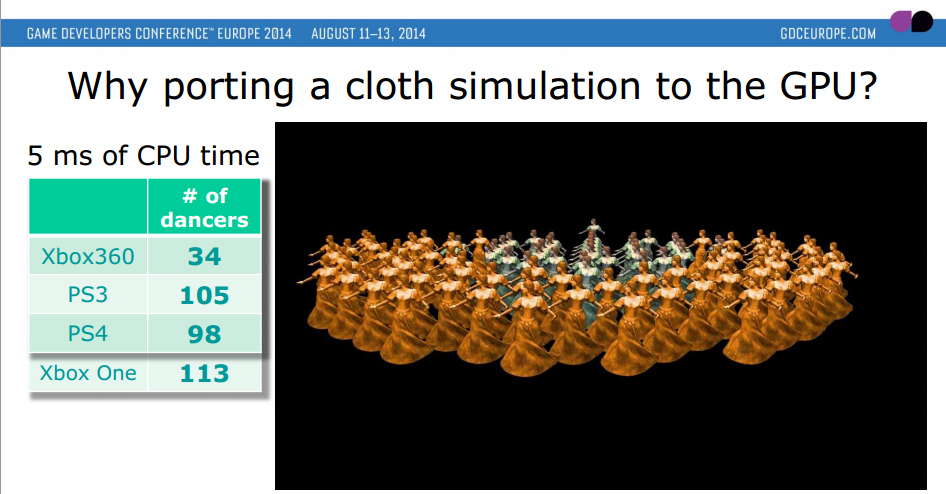

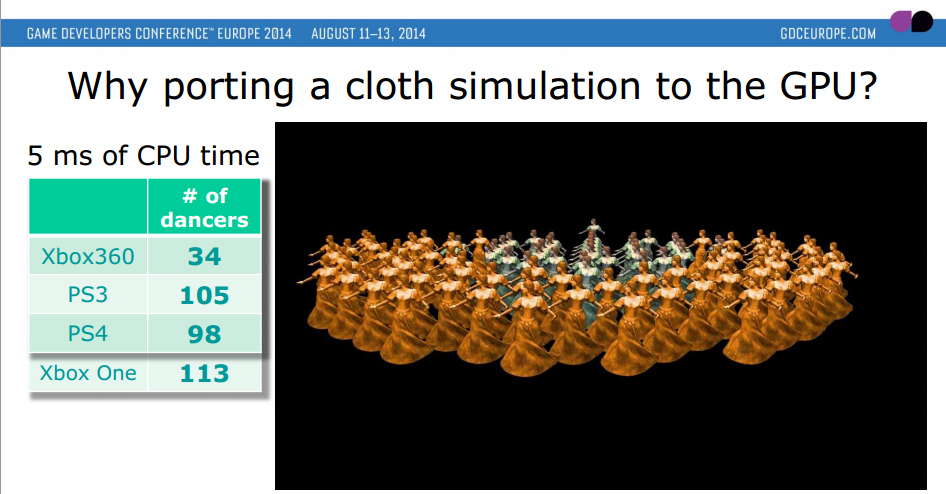

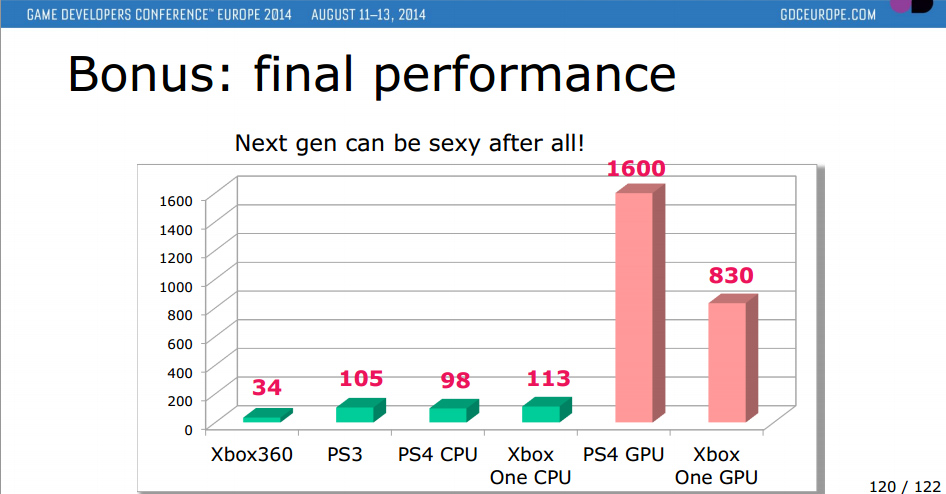

CPU

You’ll notice the PlayStation 4’s CPU performs slightly worse than the Xbox One’s; this difference can be attributed to the raw clock speed gap between the two machines. Microsoft, as you might recall, bumped up the clock speed of its CPU (an AMD Jaguar in the same configuration as Sony’s: four cores per module, two modules in total) from 1.6GHz to 1.75GHz. Sony didn’t do this, and despite plenty of speculation about the PS4’s actual CPU clock frequency, Sony eventually confirmed it had been left at 1.6GHz, giving Microsoft a slight advantage.
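Since both CPUs are the same eight-core Jaguar design, a naive comparison scales with clock speed alone. This small Python sketch assumes identical work per clock on both consoles (a simplification that ignores memory and everything else) and just turns the two quoted clocks into a percentage:

```python
# CPU clocks as quoted above (GHz); both consoles use 8 Jaguar cores
# (two four-core modules).
PS4_CPU_GHZ = 1.6
XB1_CPU_GHZ = 1.75
CORES = 8


def clock_advantage(faster_ghz: float, slower_ghz: float) -> float:
    """Percentage by which faster_ghz exceeds slower_ghz."""
    return (faster_ghz / slower_ghz - 1.0) * 100.0


def aggregate_ghz(ghz: float, cores: int = CORES) -> float:
    """Naive total core-GHz, assuming identical per-clock throughput."""
    return ghz * cores


print(f"Xbox One clock advantage: {clock_advantage(XB1_CPU_GHZ, PS4_CPU_GHZ):.1f}%")
print(f"PS4: {aggregate_ghz(PS4_CPU_GHZ):.1f} core-GHz, "
      f"Xbox One: {aggregate_ghz(XB1_CPU_GHZ):.1f} core-GHz")
```

Under that assumption the Xbox One’s CPU comes out a little over 9 percent ahead, which matches the "slight advantage" described above.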

RAM

If you’ve been following the next generation since the initial launch rumors, you might recall much being made of the memory bandwidth situation in both consoles, and Sony clearly has the advantage here. Although both consoles use the same memory bus width (256-bit), Sony opted for GDDR5 running at an effective 5500MHz rather than Microsoft’s slower DDR3 running at 2133MHz. This gives the Xbox One about 68GB/s of bandwidth (counting the DDR3 alone) and the PS4 176GB/s.
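Both bandwidth figures fall out of one simple formula: bytes per transfer (bus width divided by 8) times the effective transfer rate. A minimal Python sketch, assuming the quoted "MHz" figures are effective data rates (millions of transfers per second) and leaving the Xbox One’s eSRAM out entirely:

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_mts: float) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes).

    bus_width_bits: width of the memory interface in bits.
    data_rate_mts: effective transfer rate in megatransfers per second
                   (the "MHz" figure usually quoted for DDR3/GDDR5).
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * data_rate_mts * 1e6 / 1e9


ps4 = peak_bandwidth_gbs(256, 5500)  # GDDR5
xb1 = peak_bandwidth_gbs(256, 2133)  # DDR3 only; eSRAM not included
print(f"PS4: {ps4:.1f} GB/s, Xbox One (DDR3): {xb1:.1f} GB/s, ratio {ps4 / xb1:.2f}x")
```

With the same 256-bit bus on both machines, the whole gap comes from the transfer rate, which is why the ratio is simply 5500 / 2133.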

This isn’t so bad when you consider that Microsoft’s console has a third fewer shaders (768 versus the PS4’s 1,152, albeit running at a slightly higher clock speed), which helps lessen its bandwidth requirement somewhat. On top of this, the Xbox One uses the rather infamous eSRAM to make up for the deficit. But despite all of this, the PlayStation 4’s memory bandwidth isn’t endless. We’ve also since found out that the PS4’s memory latency, once suspected to be a problem because of a myth about GDDR5 RAM, won’t be an issue (see here for more info).

Rather, the PS4’s memory bandwidth is roughly what you’d expect given the relative performance of its GPU.
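One way to sanity-check "roughly what you’d expect" is to divide each console’s bandwidth by its peak shader throughput. The sketch below assumes the commonly cited configurations (PS4: 1,152 shaders at 800MHz; Xbox One: 768 shaders at 853MHz), counts one fused multiply-add (2 FLOPs) per shader per clock, and uses the DDR3 figure alone for the Xbox One, so it deliberately understates what the eSRAM lets that console feed its shaders:

```python
# Assumed configurations: shader counts, GPU clocks (MHz) and main-memory
# bandwidth (GB/s). The Xbox One figure is DDR3 only; eSRAM is excluded.
GPUS = {
    "PS4": {"shaders": 1152, "clock_mhz": 800, "bandwidth_gbs": 176.0},
    "Xbox One": {"shaders": 768, "clock_mhz": 853, "bandwidth_gbs": 68.0},
}


def peak_gflops(shaders: int, clock_mhz: float) -> float:
    """Single-precision peak: each shader retires one FMA (2 FLOPs) per clock."""
    return shaders * 2 * clock_mhz / 1000.0


def bytes_per_flop(bandwidth_gbs: float, gflops: float) -> float:
    """Bytes of main-memory bandwidth available per FLOP at peak."""
    return bandwidth_gbs / gflops


for name, gpu in GPUS.items():
    gf = peak_gflops(gpu["shaders"], gpu["clock_mhz"])
    ratio = bytes_per_flop(gpu["bandwidth_gbs"], gf)
    print(f"{name}: {gf:.0f} GFLOPS, {ratio:.3f} bytes/FLOP")
```

On these numbers the PS4 has roughly 0.095 bytes of bandwidth per FLOP against about 0.052 for the Xbox One’s DDR3, which is exactly the gap the eSRAM is meant to cover.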

Robert Hallock from AMD told us in an exclusive interview:

“GPU design is analogous to the game engine design question you previously posed. Everything does have to be balanced. You could throw fistfuls of render backends at a GPU, but if your memory bandwidth is insufficient, then that hardware is wasted. And vice versa, of course.

I think it can best be explained by working backwards, asking yourself: “What performance and resolution target do I want to hit?” Then you build a core out on paper that, by your mathematical models, would yield performance roughly equivalent to your target. Then you build it!

For 512 shader units, I would say: 32 texture units, 16 ROPs and a 128-bit bus.” You can find out much more in our interview with AMD here.
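Hallock gives a ratio rather than a formula, but it’s tempting to see what it implies at console scale. The sketch below simply scales his 512-shader example linearly to each console’s shader count (768 and 1,152, the commonly cited figures). That linear scaling is our own assumption, not something Hallock said, and bus width alone says nothing about memory speed (a 256-bit DDR3 bus and a 256-bit GDDR5 bus deliver very different bandwidth):

```python
# Hallock's rule-of-thumb configuration for a 512-shader GPU.
REFERENCE = {"shaders": 512, "texture_units": 32, "rops": 16, "bus_bits": 128}


def scale_config(shaders: int) -> dict:
    """Linearly scale the reference ratios to a different shader count.

    Linear scaling is an illustrative assumption only; real designs round
    to hardware-friendly sizes and trade bus width against memory speed.
    """
    factor = shaders / REFERENCE["shaders"]
    return {key: round(value * factor) for key, value in REFERENCE.items()}


for name, shaders in [("Xbox One", 768), ("PS4", 1152)]:
    print(name, scale_config(shaders))
```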

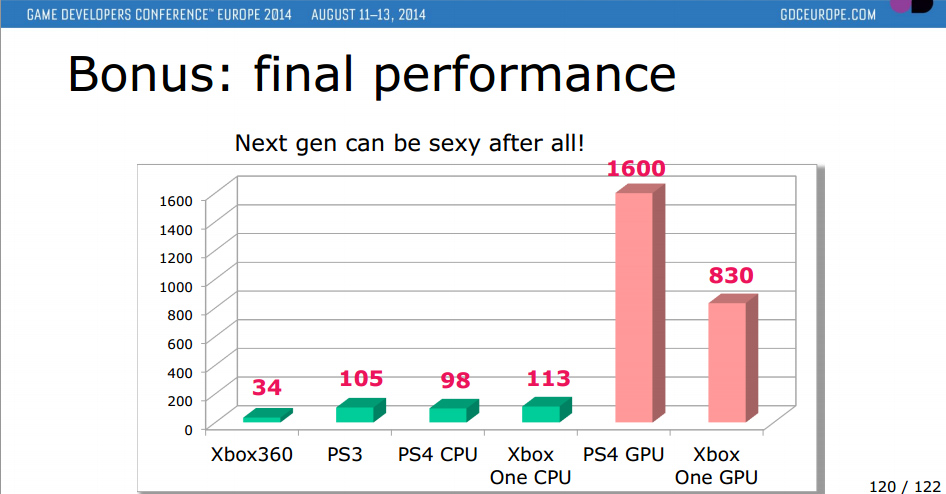

GPU

I’m sure the above is what you’ve all been waiting for, and it makes a lot of sense. At the same time, though, the performance gap between the Xbox One’s GPU and the PlayStation 4’s GPU is actually larger than you might think. What Ubisoft do say is “PS4 – 2 ms of GPU time – 640 dancers”, but they give no equivalent figure for the Xbox One, which is a bit of a shame. It’s clear, however, that in the benchmark Ubisoft used here, the PS4’s GPU is virtually twice as fast.

With a little guesswork, this likely comes down to a few factors. The first is raw shader power: 1.84 TFLOPS versus roughly 1.31 TFLOPS. The second is the memory bandwidth equation, and the third is the more robust compute structure of the PlayStation 4. The additional ACEs (Asynchronous Compute Engines) in the PS4’s GPU help a lot with task scheduling, and generally speed up the compute and graphics commands issued to the shaders. The story goes that Sony, believing compute was the future of this console generation, requested these changes to the GPU, which is why the PS4’s GPU shares many similarities with AMD’s Volcanic Islands.
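A back-of-the-envelope check shows why shader power alone doesn’t explain a near-2x gap. This Python sketch uses 1.84 TFLOPS for the PS4 and roughly 1.31 TFLOPS for the Xbox One (768 shaders × 2 FLOPs per clock × 853MHz), the DDR3-only bandwidth figures from earlier, and treats the article’s "virtually double" as a hypothetical 2.0x, since Ubisoft gave no Xbox One number:

```python
# PS4 vs Xbox One figures; the Xbox One bandwidth is DDR3 only (no eSRAM).
ps4 = {"tflops": 1.84, "bandwidth_gbs": 176.0}
xb1 = {"tflops": 1.31, "bandwidth_gbs": 68.0}
# Hypothetical: the article's "virtually double" reading of Ubisoft's benchmark.
observed_speedup = 2.0

compute_ratio = ps4["tflops"] / xb1["tflops"]
bandwidth_ratio = ps4["bandwidth_gbs"] / xb1["bandwidth_gbs"]
# Portion of the speedup that peak shader throughput cannot account for.
unexplained = observed_speedup / compute_ratio

print(f"Shader throughput ratio: {compute_ratio:.2f}x")
print(f"Main-memory bandwidth ratio: {bandwidth_ratio:.2f}x")
print(f"Speedup not explained by shader throughput alone: {unexplained:.2f}x")
```

Peak FLOPS only accounts for about 1.4x, leaving a similar factor to be explained by bandwidth, the extra ACEs, or the other candidates discussed here.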

Other possible contributors are the PS4’s more robust bus structure and the so-called ‘Volatile Bit’. Either way, it’s clearly a performance win on the GPU side for Sony. So ‘parity’ could partly be a PR effort, or, in some cases, the relatively weak CPU in both machines may be holding everything back.

It’s unfortunate that Ubisoft were rather quiet on the Xbox One front. We do have a little information, for example from Microsoft and AMD’s Developer Day Conference (analysis here), but other than that we’re left with precious little. It remains to be seen how much DX12 will change the formula, but for now, gamers are still going to want answers about the reasons for ‘parity’.
